How Discord scaled search from billions to TRILLIONS of messages 🔍


Ever used the search bar in Discord to find an old message?

Maybe you searched "meeting link" in your work server. Or tried to find that meme someone shared 2 years ago. Or looked for a conversation with a friend from months back.

That simple search bar is querying trillions of messages across millions of servers. And recently, Discord completely rebuilt how it works.

Let me break down what happened.

First, Some Context

What happens when you search on Discord?

When you type "project deadline" in a Discord server:

Your query hits Discord's search infrastructure

It finds the right partition (called an "index") for that server

Looks up the messages in that index that match your terms

Returns results sorted by relevance

Simple enough. But now imagine doing this for:

200+ million monthly active users

Millions of servers

Some servers with billions of messages

That's a different beast entirely.

What are Elasticsearch and Lucene?

Discord uses Elasticsearch for search. But what is it?

Think of it like this:

Lucene is the core search engine - the actual code that scans through text, builds search indexes, and finds matching documents. It's like the engine of a car.

Elasticsearch is a wrapper around Lucene that makes it distributed and scalable. It handles splitting data across multiple servers, replication, and coordination. It's like the car itself - steering wheel, transmission, everything that makes the engine usable.

When you search on Discord, Elasticsearch receives your query and uses Lucene under the hood to find matches.

What is "indexing"?

Every message you send on Discord needs to be indexed - converted into a searchable format.

Raw message: "Hey, are we meeting tomorrow at 3pm?"

Indexed format: The words "hey", "meeting", "tomorrow", "3pm" get stored in a special data structure (an inverted index) that maps each word to the messages containing it. Filler words like "are" and "at" are typically left out.

Without indexing, Discord would have to scan every single message character by character. With indexing, it can jump directly to messages containing your search terms.
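
To make that concrete, here is a minimal sketch of an inverted index in Python. It is a toy illustration, not how Lucene is actually implemented: the tokenizer, the stop-word list, and the names `tokenize`, `build_index`, and `search` are all assumptions made up for this example.

```python
import re
from collections import defaultdict

# Hypothetical stop-word list; real analyzers are configurable.
STOP_WORDS = {"are", "we", "at", "the", "a"}

def tokenize(text):
    """Lowercase the text and split it into word tokens, dropping stop words."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOP_WORDS]

def build_index(messages):
    """Map each token to the set of message IDs that contain it."""
    index = defaultdict(set)
    for msg_id, text in messages.items():
        for token in tokenize(text):
            index[token].add(msg_id)
    return index

def search(index, query):
    """Return the IDs of messages containing every token in the query."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    result = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        result &= index.get(token, set())
    return result

messages = {
    1: "Hey, are we meeting tomorrow at 3pm?",
    2: "The meeting link is in the channel",
    3: "Lunch tomorrow?",
}
index = build_index(messages)
print(search(index, "meeting tomorrow"))  # {1}
```

The lookup touches only the entries for "meeting" and "tomorrow". It never reads the text of message 2 or 3.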

What is "bulk indexing"?

Discord doesn't index messages one by one. That would be painfully slow.

Instead, they collect messages in batches (say, 50 at a time) and index them together. This is bulk indexing.

Analogy:

❌ Going to the post office 50 times for 50 letters

✅ Going once with all 50 letters

Way more efficient.
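
As a rough sketch of what a batch looks like on the wire: Elasticsearch's `_bulk` endpoint accepts newline-delimited JSON, with an action line followed by a document line for each item. The Python below only builds that request body. The index name `messages-guild-1`, the `content` field, and the helper `build_bulk_body` are assumptions for illustration, not Discord's actual schema.

```python
import json

def build_bulk_body(index_name, docs):
    """Build a newline-delimited JSON body for Elasticsearch's _bulk endpoint.

    Each document becomes two lines: an action line and the document itself.
    The body must end with a trailing newline.
    """
    lines = []
    for doc in docs:
        lines.append(json.dumps({"index": {"_index": index_name, "_id": doc["id"]}}))
        lines.append(json.dumps({"content": doc["content"]}))
    return "\n".join(lines) + "\n"

# 50 hypothetical messages packed into a single request body.
docs = [{"id": str(i), "content": f"message {i}"} for i in range(50)]
body = build_bulk_body("messages-guild-1", docs)
print(body.count("\n"))  # 100 lines: 50 action lines + 50 documents
```

One HTTP request carries all 50 documents, instead of 50 separate round trips.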

How Discord's Old System Worked

Here's the flow when you sent a message:

You send message

↓

Message enters Redis queue (waiting line)

↓

Worker picks up 50 messages from queue

↓

Worker bulk-indexes all 50 to Elasticsearch

↓

Messages stored across different nodes

Discord had 2 large Elasticsearch clusters with 200+ nodes each. Different Discord servers lived on different nodes.

This worked fine for years. Until it didn't.

The Problems

Problem 1: One Bad Node = 40% Failure Rate

Here's where things got ugly.

When a worker picked up 50 messages, those messages belonged to different Discord servers. So they went to different Elasticsearch nodes:

Batch of 50 messages:

├── Message 1 (Gaming server) → Node 23

├── Message 2 (Study group) → Node 87

├── Message 3 (Friend's DM) → Node 45

├── Message 4 (Work server) → Node 12

└── ... spread across ~50 nodes

Now, what happens if Node 45 crashes?

The entire bulk operation was treated as failed. All 50 messages went back to the queue to retry.

Even though 49 out of 50 operations succeeded, one failure meant everything retried.

Let's do the math:

Take a cluster of ~100 nodes with exactly one node down

Each of the 50 messages in a batch lands on an effectively random node

Probability that at least one of the 50 targets the failed node = 1 - (99/100)^50 ≈ 40%

So a single node failure caused roughly 40% of all bulk indexing operations to fail. Those failed batches went back to the queue, creating more load, causing more failures. A vicious cycle.
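
The 40% figure can be checked with a few lines of Python. This sketch assumes each message in a batch is routed independently and uniformly to one of the nodes, which is a simplification. The name `batch_failure_probability` is made up for this example.

```python
def batch_failure_probability(num_nodes, batch_size, failed_nodes=1):
    """Chance that at least one message in a batch targets a failed node.

    Assumes each message lands on a node chosen independently and uniformly.
    """
    p_message_ok = 1 - failed_nodes / num_nodes
    return 1 - p_message_ok ** batch_size

p = batch_failure_probability(num_nodes=100, batch_size=50)
print(f"{p:.1%}")  # 39.5%
```

Under the same assumptions, the bigger the batch, the more likely it is to include a message bound for the broken node.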

Problem 2: Redis Dropped Messages Permanently

Because so many batches were failing and retrying, the Redis queue got massively backed up.

Redis is an in-memory database. It's incredibly fast, but it has limits. When millions of messages piled up:

Redis CPU maxed out trying to manage the queue

To survive, Redis started dropping messages

Those messages were lost forever

The result? Some messages were never indexed. Users would search for something they definitely said, and it wouldn't show up. Because it was never made searchable.

Problem 3: Lucene's 2 Billion Document Limit

Remember Lucene, the search engine under Elasticsearch?

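It has a hard limit: roughly 2 billion documents (just under 2^31) per Lucene index. In Elasticsearch, each shard is its own Lucene index, so a server whose messages all sit in one shard runs straight into that cap.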

This sounds like a lot. But consider Discord servers like:

Fortnite Official

Minecraft

Large gaming/streaming communities

These servers have billions of messages. They hit the limit.

When that happened, new messages in that server stopped being indexed. Search completely broke for their largest communities.

Discord's temporary fix? Work with the Safety team to find spam servers and delete them to free up space. Not exactly a scalable solution.

Problem 4: Couldn't Do Software Updates

Because the system was so fragile (one node down = 40% failure), Discord couldn't do rolling restarts.

Normally, you update software by:

Take down Node 1, update it, bring it back

Take down Node 2, update it, bring it back

Repeat for all nodes

But with Discord's system, taking down even one node caused chaos.

When the Log4Shell vulnerability hit (a critical security issue affecting most Java applications), Discord had to take the entire search system offline to patch it.

A system that tolerates rolling restarts can be patched one node at a time while staying online. Discord needed a maintenance window.

Problem 5: No Cross-DM Search

This one affected users directly.

Messages were organized (sharded) by server or DM channel. All messages from "Gaming Server" lived together. All messages from your DM with Alex lived together.

But what if you wanted to search across all your DMs?

"Where's that flight confirmation someone sent me?"

Discord would need to query every single DM channel you've ever had. If you've messaged 200 people, that's 200 separate queries. Too slow and expensive.

So cross-DM search simply didn't exist.

The Solutions

Discord didn't just patch these issues. They rebuilt the entire system.

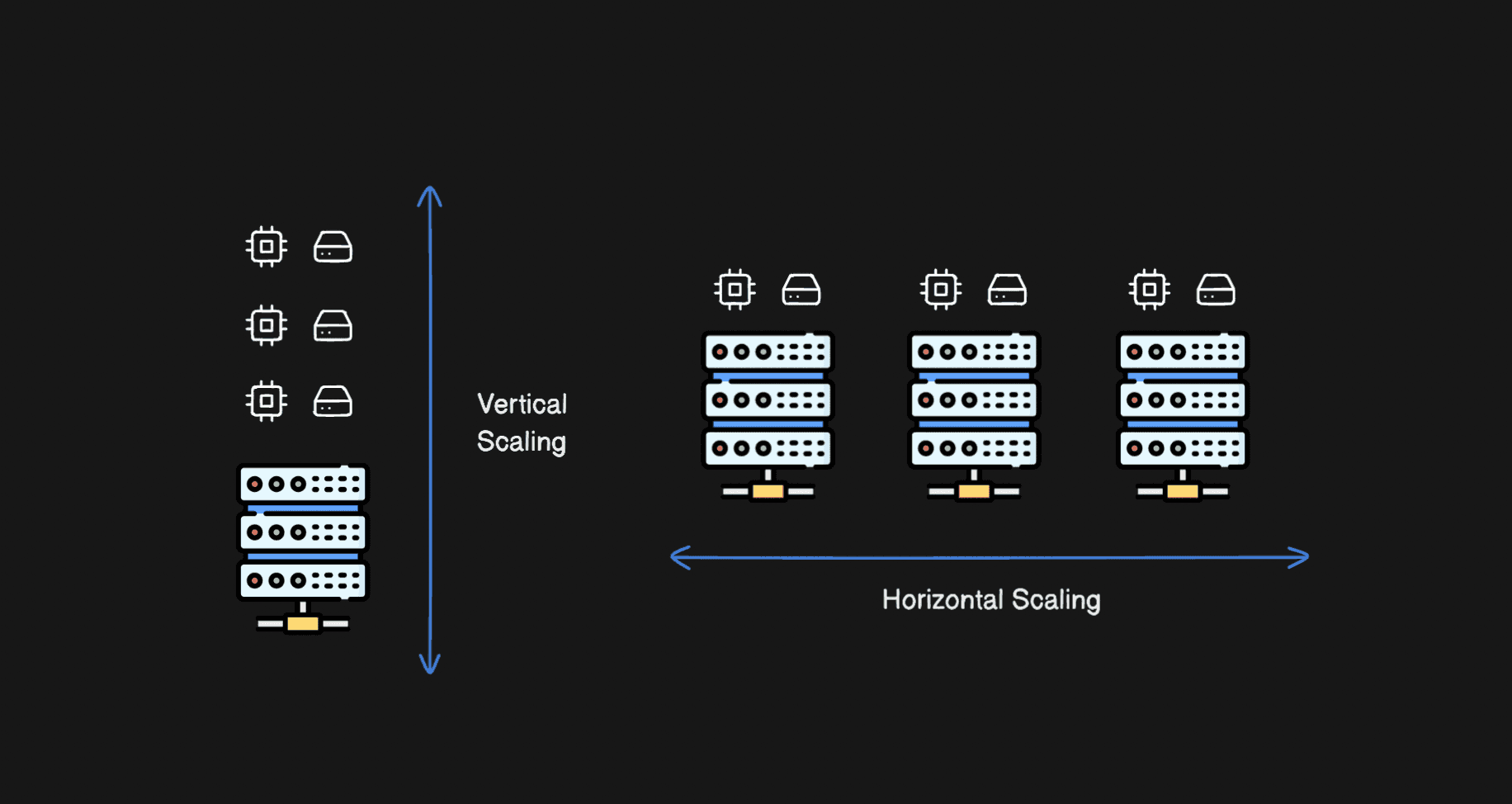

Solution 1: Cell Architecture (40 Small Clusters)

Old: 2 massive clusters with 200+ nodes each

New: 40 smaller clusters grouped into "cells"

Each small cluster has:

3 Master nodes - coordinate the cluster (one per availability zone)

3+ Ingest nodes - receive and preprocess data (stateless, can scale up/down)

Multiple Data nodes - store the actual messages (replicated across zones)

Why is this better?

If one cluster has problems, 39 others are completely unaffected. The blast radius of any failure is now much smaller.

It's like having 40 small libraries instead of 2 giant ones. One library floods? The other 39 still work fine.

Solution 2: Smart Batching by Destination

Old: Batch 50 random messages → send to 50 different nodes → one failure kills the batch

New: Group messages by destination before batching

Messages for Node 23: [msg1, msg4, msg12, msg38] → Batch A

Messages for Node 45: [msg2, msg7, msg29] → Batch B

Messages for Node 87: [msg3, msg15, msg41, msg50] → Batch C

Now if Node 45 crashes:

Batch A ✅ succeeds

Batch B ❌ fails and retries

Batch C ✅ succeeds

One node failure only affects that node's messages. Not 40% of everything.

Discord built a "Message Router" in Rust that:

Streams messages from the queue

Groups them by destination (cluster + index)

Spawns a separate task for each destination

Each task collects and bulk-indexes its own messages
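
The grouping idea can be sketched in a few lines. Discord's router is written in Rust and runs a concurrent task per destination; the Python below is a simplified, sequential illustration only. The message fields, the cluster and index names, and `fake_bulk_index` (a stand-in for a real Elasticsearch client) are all hypothetical.

```python
from collections import defaultdict

def group_by_destination(messages):
    """Group messages by (cluster, index) so each batch has one destination."""
    batches = defaultdict(list)
    for msg in messages:
        batches[(msg["cluster"], msg["index"])].append(msg)
    return dict(batches)

def index_batches(batches, bulk_index):
    """Send each batch on its own; return the messages that need a retry."""
    to_retry = []
    for destination, batch in batches.items():
        try:
            bulk_index(destination, batch)
        except ConnectionError:
            # Only this destination's messages are retried.
            to_retry.extend(batch)
    return to_retry

# Hypothetical stand-in for an Elasticsearch client: one cluster is down.
def fake_bulk_index(destination, batch):
    if destination[0] == "cluster-45":
        raise ConnectionError("cluster-45 is unavailable")

messages = [
    {"id": 1, "cluster": "cluster-23", "index": "guild-a"},
    {"id": 2, "cluster": "cluster-45", "index": "guild-b"},
    {"id": 3, "cluster": "cluster-87", "index": "guild-c"},
    {"id": 4, "cluster": "cluster-23", "index": "guild-a"},
    {"id": 5, "cluster": "cluster-45", "index": "guild-b"},
]
retry = index_batches(group_by_destination(messages), fake_bulk_index)
print([m["id"] for m in retry])  # [2, 5]
```

Messages 1, 3, and 4 are indexed on the first try. Only the two messages bound for the unavailable cluster go back for a retry.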

Solution 3: Redis → Google PubSub

Old: Redis queue drops messages when overwhelmed

New: Google PubSub with guaranteed delivery

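Pub/Sub is a managed messaging service. Its key property here: a message stays in the subscription until a subscriber acknowledges it (within the configured retention window), so a backed-up queue doesn't shed messages under load.

If indexing slows down, messages wait in the queue. They might wait for minutes or hours. But they aren't dropped just because the indexers fell behind.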

The tradeoff: during failures, search results might be delayed. But they'll never be permanently missing.
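
The "delayed, not lost" behavior comes down to acknowledgements: a message that isn't acknowledged gets delivered again. The sketch below models that idea with a toy in-memory queue. It does not use the Pub/Sub client library, and `AckQueue`, `drain`, and `flaky_index` are hypothetical names invented for this example.

```python
from collections import deque

class AckQueue:
    """Toy in-memory queue: a message is removed only once it is acknowledged."""

    def __init__(self):
        self._pending = deque()

    def publish(self, message):
        self._pending.append(message)

    def pull(self):
        """Return the oldest unacknowledged message without removing it."""
        return self._pending[0] if self._pending else None

    def ack(self, message):
        self._pending.remove(message)

    def __len__(self):
        return len(self._pending)

def drain(queue, index_message, max_attempts=10):
    """Index messages in order, acknowledging each only after it succeeds."""
    attempts = 0
    while len(queue) and attempts < max_attempts:
        attempts += 1
        message = queue.pull()
        try:
            index_message(message)
        except ConnectionError:
            continue  # no ack: the message stays queued and is tried again
        queue.ack(message)

# Hypothetical indexer that fails twice before recovering.
state = {"failures_left": 2}
indexed = []

def flaky_index(message):
    if state["failures_left"] > 0:
        state["failures_left"] -= 1
        raise ConnectionError("search cluster unavailable")
    indexed.append(message)

queue = AckQueue()
for text in ["msg-1", "msg-2", "msg-3"]:
    queue.publish(text)
drain(queue, flaky_index)
print(indexed, len(queue))  # ['msg-1', 'msg-2', 'msg-3'] 0
```

The two failed attempts cost time, not data: every message is eventually indexed.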

Solution 4: BFGs Get Dedicated Clusters

BFG = Big Freaking Guilds (Discord's internal term 😄)

For servers approaching the 2 billion limit, Discord now:

Creates a dedicated cluster just for that server

Uses multiple shards instead of one (spreads data across multiple Lucene indexes)

Migrates messages with zero downtime

The migration flow:

Step 1: Create new index with 2x shards

Step 2: Dual-write (new messages go to OLD and NEW index)

Step 3: Backfill historical messages to NEW index

Step 4: Switch queries to NEW index

Step 5: Stop writing to OLD index

Step 6: Clean up OLD index

Users notice nothing. Search keeps working throughout.

This is similar to how Stripe does zero-downtime database migrations - dual-write, backfill, switch, cleanup.
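
Here is a toy model of that flow, with plain Python dicts standing in for the old and new indexes. It is a sketch of the general dual-write pattern under simplifying assumptions (no concurrency, no failures), not Discord's migration tooling. The `Migration` class and its method names are invented for this example.

```python
class Migration:
    """Toy model of a dual-write index migration. Indexes are plain dicts."""

    def __init__(self, old_index):
        self.old = old_index
        self.new = {}             # Step 1: create the new index
        self.dual_write = True    # Step 2: writes go to both indexes
        self.read_from_new = False

    def write(self, msg_id, text):
        if self.dual_write:
            self.old[msg_id] = text
        self.new[msg_id] = text

    def backfill(self):
        """Step 3: copy historical messages without overwriting newer writes."""
        for msg_id, text in self.old.items():
            self.new.setdefault(msg_id, text)

    def switch_reads(self):
        """Step 4: serve queries from the new index."""
        self.read_from_new = True

    def finish(self):
        """Steps 5 and 6: stop writing to the old index, then drop it."""
        self.dual_write = False
        self.old = None

    def search(self, term):
        index = self.new if self.read_from_new else self.old
        return sorted(i for i, text in index.items() if term in text)

m = Migration({1: "old deadline", 2: "old meme"})
m.write(3, "new deadline")        # arrives during the migration
before = m.search("deadline")     # still served by the old index
m.backfill()
m.switch_reads()
m.finish()
m.write(4, "another deadline")
after = m.search("deadline")
print(before, after)  # [1, 3] [1, 3, 4]
```

At every point, the index serving queries holds all the messages written so far, which is why search never has to go offline.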

Solution 5: DMs Sharded by User

Old: DMs sharded by channel

DM with Alex → Index A

DM with Jordan → Index B

DM with Sam → Index C

Searching across all DMs = querying A + B + C + hundreds more

New: DMs sharded by user

All YOUR DMs → Your personal index

Searching across all DMs = querying ONE index

This required re-indexing every DM message. But since Discord was already rebuilding everything, they took the opportunity.

Now you can search "flight confirmation" and find it instantly, no matter who sent it.
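
The difference in query fan-out is easy to see in a sketch. Everything here is an assumption made for illustration: the modulo-based shard choice, the shard count, and the idea that each DM is indexed once per participant so both people can find it. None of it is taken from Discord's implementation.

```python
NUM_SHARDS = 8  # hypothetical shard count for this example

def shard_by_channel(channel_id):
    """Old scheme: a DM's messages live in the shard chosen by its channel ID."""
    return channel_id % NUM_SHARDS

def shard_by_user(user_id):
    """New scheme: all of a user's DMs live in the shard chosen by their user ID."""
    return user_id % NUM_SHARDS

def shards_to_query_old(dm_channel_ids):
    """Cross-DM search under the old scheme: one lookup per DM channel."""
    return [shard_by_channel(c) for c in dm_channel_ids]

def shards_to_query_new(user_id):
    """Cross-DM search under the new scheme: a single lookup."""
    return [shard_by_user(user_id)]

def write_targets_new(sender_id, recipient_id):
    """Assumption: a DM is indexed once per participant so both can find it."""
    return {shard_by_user(sender_id), shard_by_user(recipient_id)}

my_user_id = 42
my_dm_channels = list(range(1000, 1200))  # 200 DM conversations
print(len(shards_to_query_old(my_dm_channels)), len(shards_to_query_new(my_user_id)))  # 200 1
```

In this sketch the read path gets much cheaper, and the cost moves to the write path, where one DM may need to be written to two places.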

Key Takeaways

1. Reduce blast radius

In the old system, a tiny failure (one node) had a massive blast radius (roughly 40% of indexing operations). The new system contains failures to small, isolated areas.

2. Never drop data

Switching from Redis to PubSub meant accepting delayed search results during failures in exchange for not losing messages. That's almost always the right tradeoff.

3. Batch intelligently

Batching is great for performance. But batch by destination, not randomly. This prevents one bad destination from affecting everything.

4. Plan for outliers

Most Discord servers are small. But the huge ones (BFGs) need special handling. Design your system to handle the 99% efficiently while having escape hatches for the 1%.

5. Migrations can enable features

Discord had to re-index everything anyway. They used that opportunity to re-shard DMs by user, enabling a completely new feature (cross-DM search).

Wrapping Up

Discord's search infrastructure went from "barely holding together" to "trillions of messages, 5x faster."

The key insight: the best systems aren't ones that never fail. They're ones where failure doesn't cascade.

One node failing should be that node's problem. Not a 40% catastrophe.

That's resilience engineering.

Full technical details: Discord Engineering Blog